
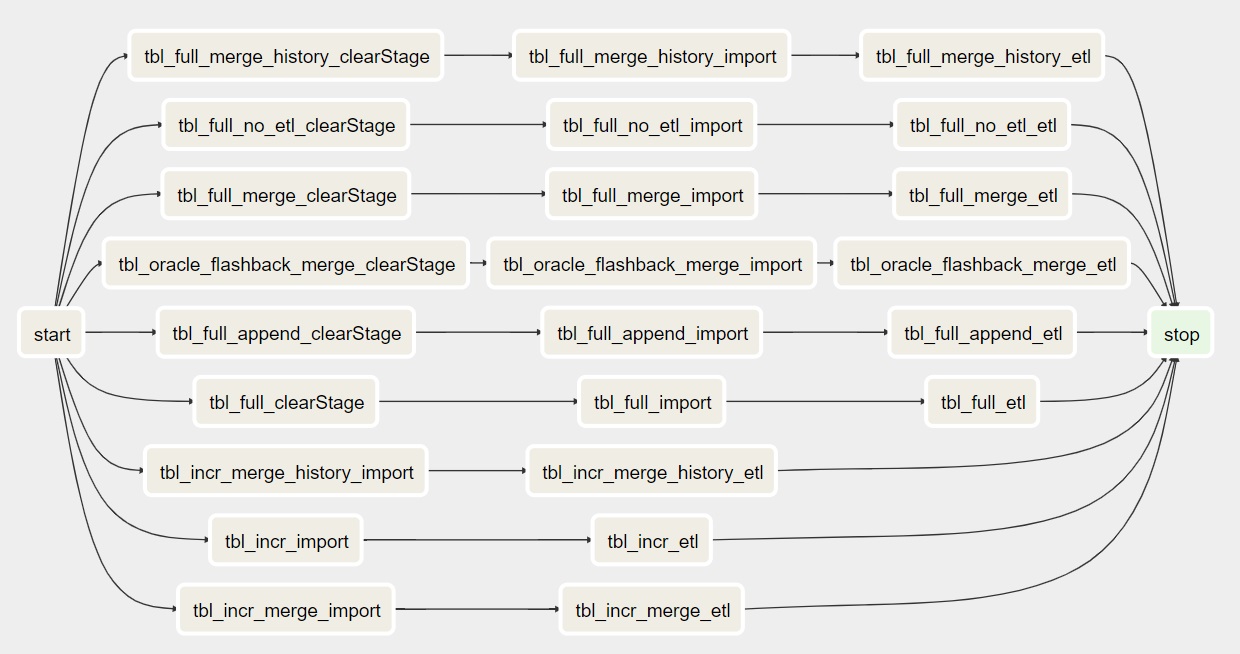

- Building Your First Data Pipeline from Scratch using Apache Airflow
- Data Pipelines with Apache Airflow - Knowing the Prerequisites
- Defining and Configuring Your First DAG
- Running Your First DAG in Apache Airflow
- How are Errors Monitored and Failures Handled in Apache Airflow?
- Top Apache Airflow Project Ideas for Practice
- A Music Streaming Platform Data Modelling DAG
- A Weather App DAG Using Apache's Rest API
- Start Building Your Data Pipelines With Apache Airflow

To understand Apache Airflow, it's essential to understand what data pipelines are. Data pipelines are a series of data processing tasks that must execute between the source and the target system to automate data movement and transformation. For example, suppose we want to build a small traffic dashboard that tells us which sections of the highway suffer traffic congestion. The tasks we will perform include:

- Clean or wrangle the data to suit the business requirements.

As the pipeline diagram shows, our simple pipeline consists of four different tasks. Notably, each task needs to be performed in a specific order. For example, analyzing the data and then cleaning it won't make sense. Therefore, we must ensure the task order is enforced when running the workflows.

Apache Airflow is a batch-oriented tool for building data pipelines. It is used to programmatically author, schedule, and monitor data pipelines, a practice commonly referred to as workflow orchestration. Airflow is an open-source platform used to manage the different tasks involved in processing data in a data pipeline.

A data pipeline in Airflow is written as a Directed Acyclic Graph (DAG) in the Python programming language. By drawing data pipelines as graphs, Airflow explicitly defines dependencies between tasks. In DAGs, tasks are displayed as nodes, whereas dependencies between tasks are illustrated using directed edges between different task nodes. If we apply the graph representation to our traffic dashboard, we can see that the directed graph provides a more intuitive representation of our overall data pipeline. The direction of each edge depicts the direction of the dependency: an edge points from one task to another and indicates which task needs to be completed before the next one is executed.

A quick glance at the graph view of the traffic dashboard pipeline shows that the graph has directed edges with no loops or cycles (acyclic). The acyclic property is significant because it prevents data pipelines from having circular dependencies. Circular dependencies are problematic because they introduce logical inconsistencies that lead to deadlock situations in a data pipeline configuration. In the cyclic graph figure, task 3 will never execute because it depends on task 4, which in turn depends on task 3. The traffic dashboard DAG, by contrast, has a clear path along which its four tasks are to be executed. The directed cyclic graph has no well-defined execution order because of the interdependency between task 3 and task 4.

How is Data Pipeline Flexibility Defined in Apache Airflow?

In Apache Airflow, a DAG is defined using Python code. The Python file describes the structure of the corresponding DAG. Consequently, each DAG file typically outlines the different tasks for a given DAG, plus the dependencies between those tasks. Apache Airflow then parses these files to establish the DAG structure. In addition, Airflow DAG files contain metadata that tells Airflow when and how to execute them. The advantage of defining Airflow DAGs using Python code is that the programmatic approach gives users a great deal of flexibility when building pipelines. For instance, users can use Python code to generate pipelines dynamically based on certain conditions. This flexibility allows extensive workflow customization, so users can fit Airflow to their needs.

Also, when you create a DAG using Python, tasks can execute any operation that can be written in the programming language. Over time, this has led to the development of many extensions by the Airflow community. These extensions enable users to execute tasks across a wide range of systems, including external databases, cloud services, and big data technologies.

How are Pipelines Scheduled and Executed in Apache Airflow?

After a data pipeline's structure has been defined as a DAG, Apache Airflow allows a user to specify a schedule interval for every DAG. The schedule determines exactly when Airflow runs a pipeline.
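The acyclic property discussed in this article can be illustrated without Airflow itself. The sketch below is a minimal example, assuming Python 3.9+ and its standard-library `graphlib` module; it is not how Airflow validates DAGs internally. The task names (`fetch_traffic_data`, `update_dashboard`, and so on) are hypothetical stand-ins for the four traffic dashboard tasks, and `execution_order` is an illustrative helper. Each dictionary maps a task to the set of tasks that must finish before it can start.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical task names for the four-task traffic dashboard pipeline.
# Each key maps to the set of tasks that must finish before it can start.
traffic_pipeline = {
    "fetch_traffic_data": set(),
    "clean_traffic_data": {"fetch_traffic_data"},
    "analyze_traffic_data": {"clean_traffic_data"},
    "update_dashboard": {"analyze_traffic_data"},
}

# A cyclic variant: task_3 waits for task_4, and task_4 waits for task_3,
# so neither of them can ever start.
cyclic_pipeline = {
    "task_1": set(),
    "task_2": {"task_1"},
    "task_3": {"task_2", "task_4"},
    "task_4": {"task_3"},
}


def execution_order(dependencies):
    """Return a valid run order, or None if the graph contains a cycle."""
    try:
        return list(TopologicalSorter(dependencies).static_order())
    except CycleError:
        return None


acyclic_order = execution_order(traffic_pipeline)
cyclic_order = execution_order(cyclic_pipeline)

print(acyclic_order)  # a valid order exists for the acyclic pipeline
print(cyclic_order)   # None: the cycle leaves no valid order
```

The acyclic pipeline yields a single unambiguous order, while the cyclic one has no valid order at all, which is the deadlock situation that a DAG rules out by construction.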
